Going places in the deepdream

Google recently open-sourced their code for visualising the features learned by neural networks for image classification (blog post). An excellent video followed, visualising the features learned at each layer of the network. I've made the equivalent video for the same network, but trained on the Places Database instead of ImageNet. I also went down through the layers and reversed the video to get a zoom-out effect. My code is online. P.S. Sorry for the 480p quality: I was running this on my laptop, which 'only' has a GeForce 840M GPU.
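For the curious, here is a rough sketch of the zoom-out trick. It is not my actual code, just the idea in plain NumPy: dream on a frame, zoom in slightly, repeat while stepping down through the layers, then play the frames backwards. The `dream_step` function is a hypothetical stand-in for one deep dream pass (here it does nothing), and the layer names, `frames_per_layer` and `scale` are made-up values for illustration.

```python
import numpy as np


def zoom_in(frame, scale=0.05):
    """Crop a centred window of the frame and stretch it back to full size.

    Uses nearest-neighbour sampling; `scale` is the fraction trimmed overall.
    """
    h, w = frame.shape[:2]
    # Source coordinates cover a centred window (1 - scale) times the frame size.
    ys = (np.linspace(0, h - 1, h) * (1 - scale) + (h - 1) * scale / 2).round().astype(int)
    xs = (np.linspace(0, w - 1, w) * (1 - scale) + (w - 1) * scale / 2).round().astype(int)
    return frame[ys][:, xs]


def dream_step(frame, layer):
    """Hypothetical placeholder for one deep dream pass at `layer`.

    A real version would run gradient ascent on that layer's activations;
    this one returns the frame unchanged so the sketch runs anywhere.
    """
    return frame


def render_zoom_out(start, layers, frames_per_layer=3, scale=0.05):
    """Dream and zoom in through `layers` (highest first), then reverse the frames."""
    frames = []
    frame = start
    for layer in layers:
        for _ in range(frames_per_layer):
            frame = dream_step(frame, layer)
            frames.append(frame)
            frame = zoom_in(frame, scale)
    # Playing a zoom-in sequence backwards gives the zoom-out effect.
    return frames[::-1]


if __name__ == "__main__":
    start = np.random.rand(48, 64, 3)
    # Placeholder layer names, ordered from the top of the network downwards.
    video = render_zoom_out(start, ["layer_high", "layer_mid", "layer_low"])
    print(len(video), video[0].shape)
```

Because the frames are reversed at the end, the last frame of the video is the first dreamed image, and the layers appear in ascending order during playback.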

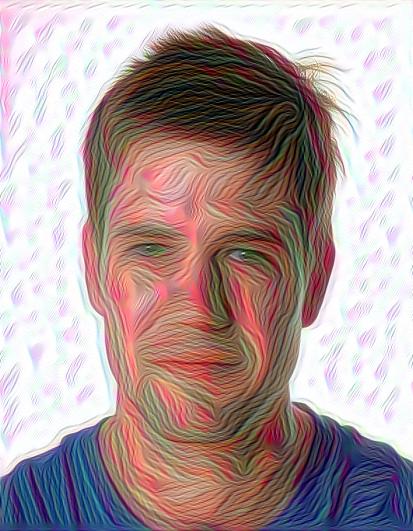

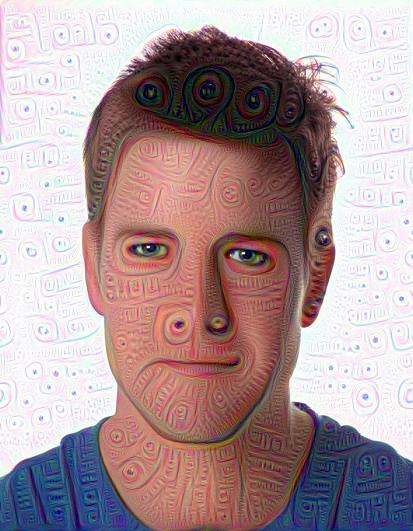

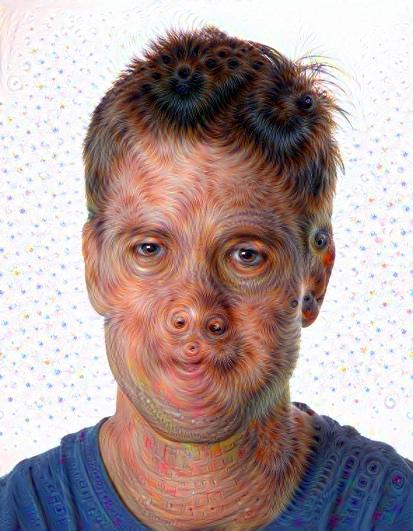

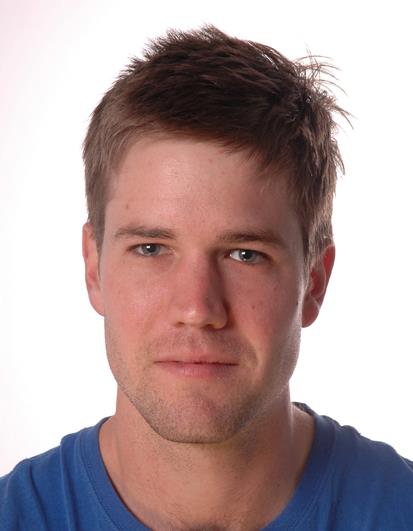

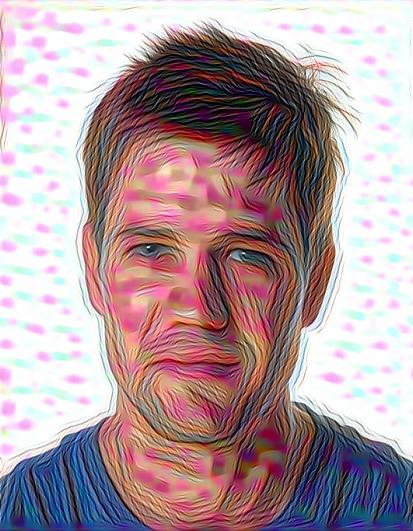

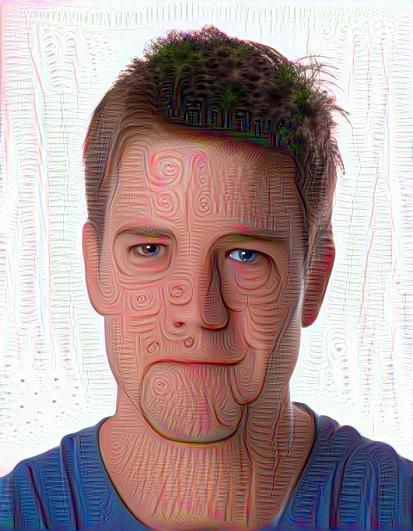

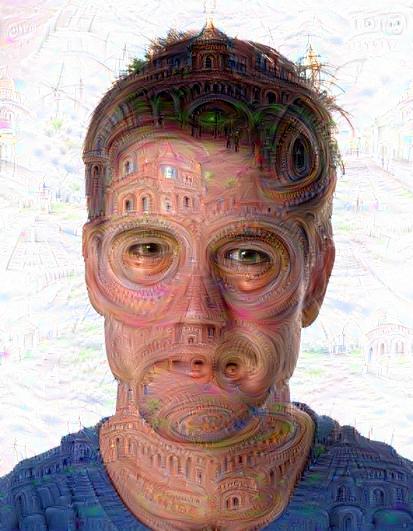

I also had some fun exploring the differences between the ImageNet- and Places-trained networks when applied to static images. I found Rowan McAllister's face to be particularly amenable to this exploration. Below are Rowan and his ImageNet deep dream counterparts as we ascend the layers of the network. As many have already noted, we start with enhanced geometric features, and then everything turns into dogs and eyes.

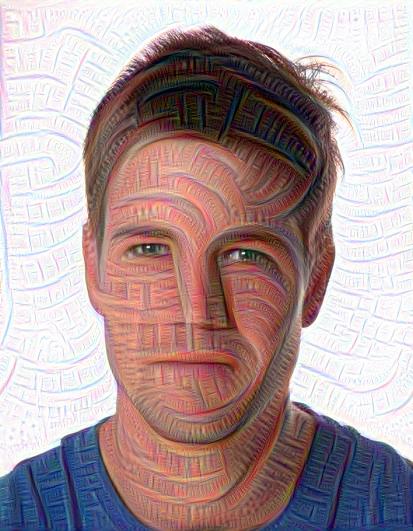

Let's contrast this with Places deep dreaming below. Again we start with geometric features, but at higher levels different geometries are enhanced. In particular, this network likes spirals and concentric circles. And eventually, everything turns into buildings!

That's all for now. Check out my code and let me know if you do anything cool.